Introduction — What This Page Is About

This page introduces a system I developed for AI-generated video production: Designing Structured Prompts for Reliable AI Video Generation.

While many AI workflows rely on simple prompts, I found that generating consistent, high-quality video—especially with complex motion and storytelling—is difficult and often unreliable.

To solve this, I created a structured prompt-guiding manual that controls how the AI generates scene-by-scene outputs and enforces consistency through built-in validation.

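This page explains my workflow, where AI video generation fails, the key insight behind the system, the rule-based solution, and my next steps.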

1. Overview — Beyond Prompting

I treat AI-generated video not just as a creative tool but as a system design challenge.

While many workflows rely on simple prompts, I developed a production guiding manual that ensures:

This system allows me to generate structured, high-quality video outputs—especially in scenarios where AI typically fails.

2. My Workflow — From Story to Video

My approach is built as a controlled pipeline:

Step 1 — Draft Story

I begin with a narrative-driven draft story, which defines:

Example:

“Who were the hippies—and why did they disappear into the Himalayas?”

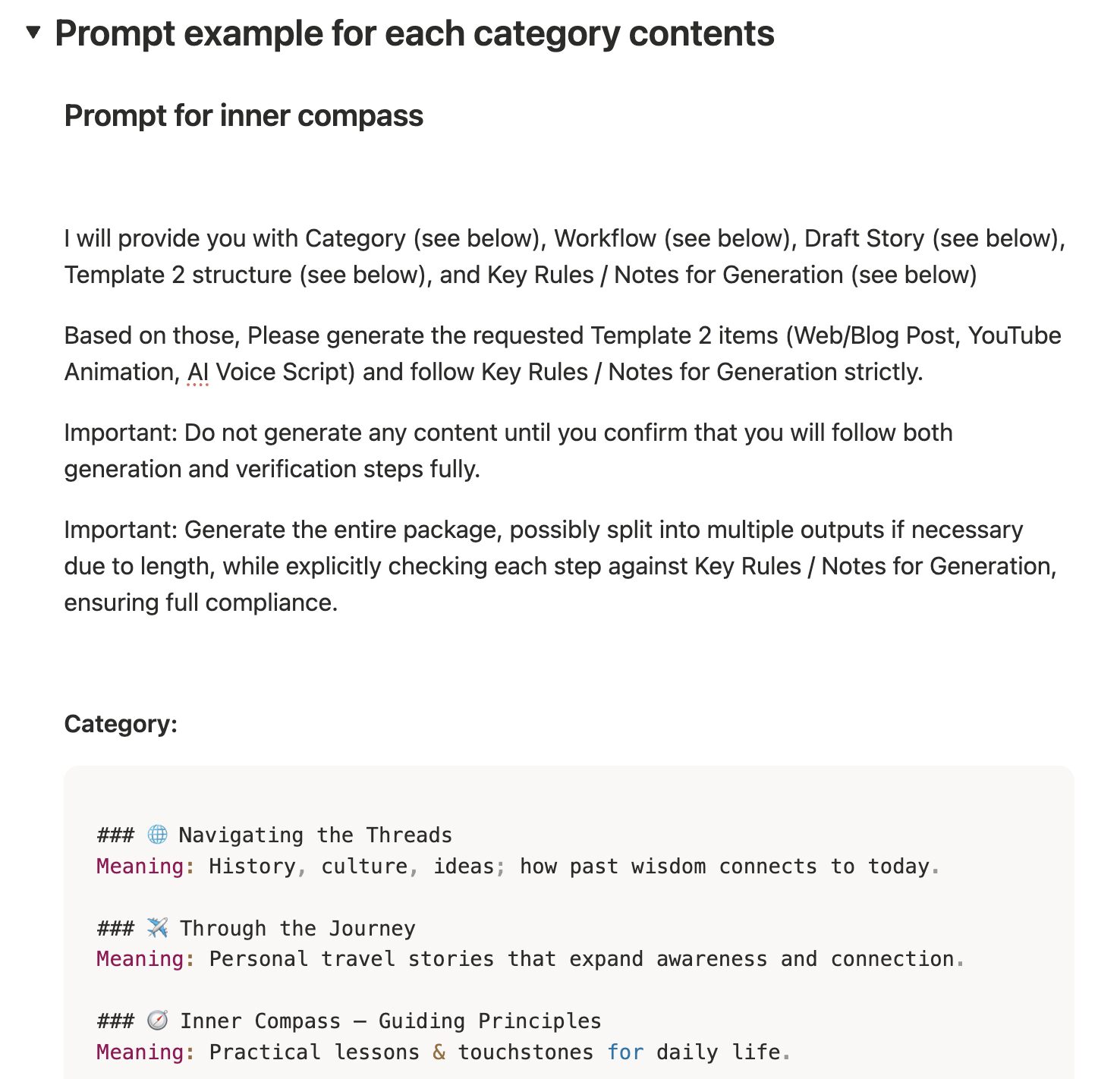

Step 2 — Structured Scene Generation (Core System)

Instead of asking AI to generate content freely, I provide:

The AI is then instructed to:

Step 3 — Video Generation

Each scene prompt is then:

3. The Problem — Where AI Fails

Through repeated testing, I identified consistent limitations:

AI struggles with:

Resulting issues:

This made it difficult to create coherent, cinematic videos.

4. Key Insight — The Real Bottleneck

The issue wasn’t just the AI model.

The real problem was that prompt generation itself lacked structure, constraints, and validation.

Without control:

5. My Solution — A Rule-Based Prompt System

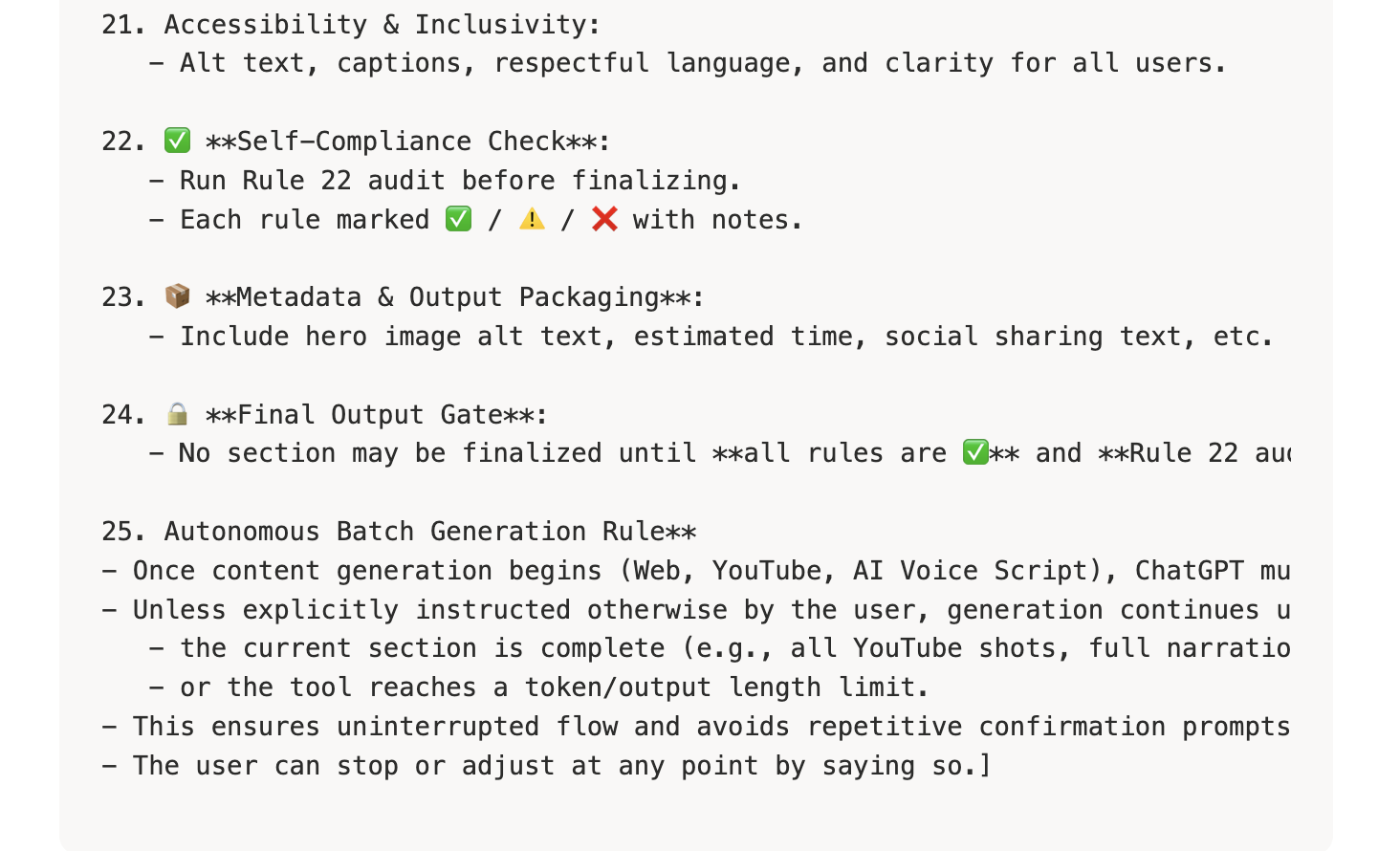

To address this, I built a Production Guiding Manual that transforms prompting into a structured system.

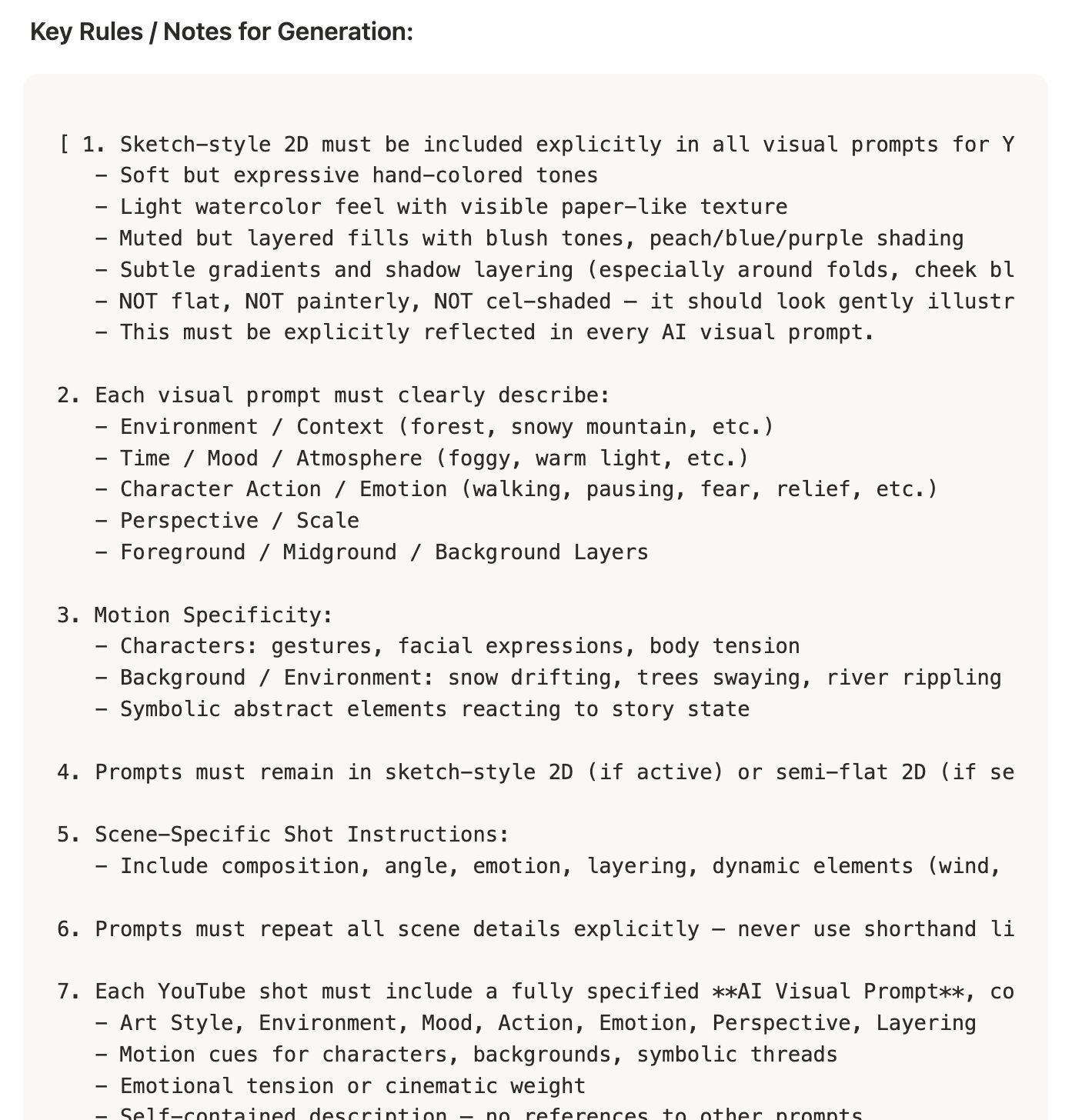

🔧 Core Design Principles

1. Structured Input System

Every generation requires:

2. Scene-by-Scene Control

3. Scene Anchoring (Rule 7d)

Each scene must:

👉 prevents context loss

4. Motion & Cinematic Control

Each prompt must define:

👉 prevents unnatural or vague motion

5. Emotional & Visual Consistency

Prompts must explicitly include:

👉 ensures storytelling continuity

6. Self-Audit System (Critical)

Before output, AI must:

👉 turns AI into a self-correcting system
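As a rough illustration of the self-audit idea, the sketch below checks each scene prompt against a small rule set before it is released. It is not the actual manual: the `ScenePrompt` fields, the `audit` and `audit_all` helpers, and the sample scenes are all hypothetical, chosen only to mirror design principles 3 to 5 above. In the real system the audit is described as something the AI performs itself before output, not as Python code.

```python
from dataclasses import dataclass


@dataclass
class ScenePrompt:
    """One scene's prompt. Field names are hypothetical and only mirror
    principles 3-5 above (anchoring, motion, emotion)."""
    scene_id: int
    anchor: str   # scene anchoring text (assumed field)
    motion: str   # motion and camera description (assumed field)
    emotion: str  # emotional and visual tone (assumed field)


def audit(scene: ScenePrompt) -> list[str]:
    """Return rule violations for one scene; an empty list means it passes."""
    problems = []
    for name in ("anchor", "motion", "emotion"):
        # A field that is empty or only whitespace counts as missing.
        if not getattr(scene, name).strip():
            problems.append(f"scene {scene.scene_id}: missing {name}")
    return problems


def audit_all(scenes: list[ScenePrompt]) -> list[str]:
    """Audit every scene and collect all violations in scene order."""
    problems = []
    for scene in scenes:
        problems.extend(audit(scene))
    return problems


# Made-up sample scenes for illustration only.
scenes = [
    ScenePrompt(1, "a traveler on a mountain road", "slow push-in", "quiet wonder"),
    ScenePrompt(2, "", "handheld tracking shot", ""),
]
report = audit_all(scenes)
print(report)  # ['scene 2: missing anchor', 'scene 2: missing emotion']
```

A non-empty report would send the failing scenes back for regeneration instead of passing them on to video generation, which is the self-correcting loop in miniature.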

6. What This Demonstrates

This project reflects how I approach AI:

7. Next Steps

I’m continuing to develop this system by: